My Homelab Overview

This is my homelab playground. What started as a few mini PCs on a desk has grown into a full server rack. I recently upgraded to a proper rack setup with dedicated networking, a rackmount server, UPS, and structured cabling. It's my space to mess with Proxmox, AI, Kubernetes, Docker, Linux and whatever new shiny tech catches my eye...

Almost everything here was bought used, and eBay is my best friend. I like to spend as little as possible and learn as much as I can across many different platforms.

I started this as a fun side project during lockdown, and it's kinda grown from there. Now I've got it running backups, a media server, CI/CD pipelines, a k8s cluster, a local LLM, monitoring dashboards, log collection, private VPNs, etc. I break it, fix it, and learn something new almost every week. Here's what I'm running...

Hardware Setup

Current Rack Setup

- Server Rack: NavePoint 25U open rack with vertical cable management and structured cabling

- Dell Monitor: Dell 22" for direct KVM/console access

- HP EliteDesk 800 G5 Mini PCs (x4): Rack-shelf mounted, Proxmox/Kubernetes nodes (32GB RAM each). Picked up used on eBay, tiny and surprisingly capable.

- Network Switch/Firewall: Ubiquiti UniFi Switch 1U silver

- Patch Panel: 24-port Cat6 keystone patch panel

- Rackmount Server: Dell R620 with dual Xeon E5-2620 (12 cores), 64GB RAM, 2x 900GB SAS

- PDU: CyberPower rack-mount power distribution

- Rack-Mount UPS: APC Smart-UPS 2U rackmount power supply

- Mesh WiFi Node: Xfinity mesh access point

Additional Nodes

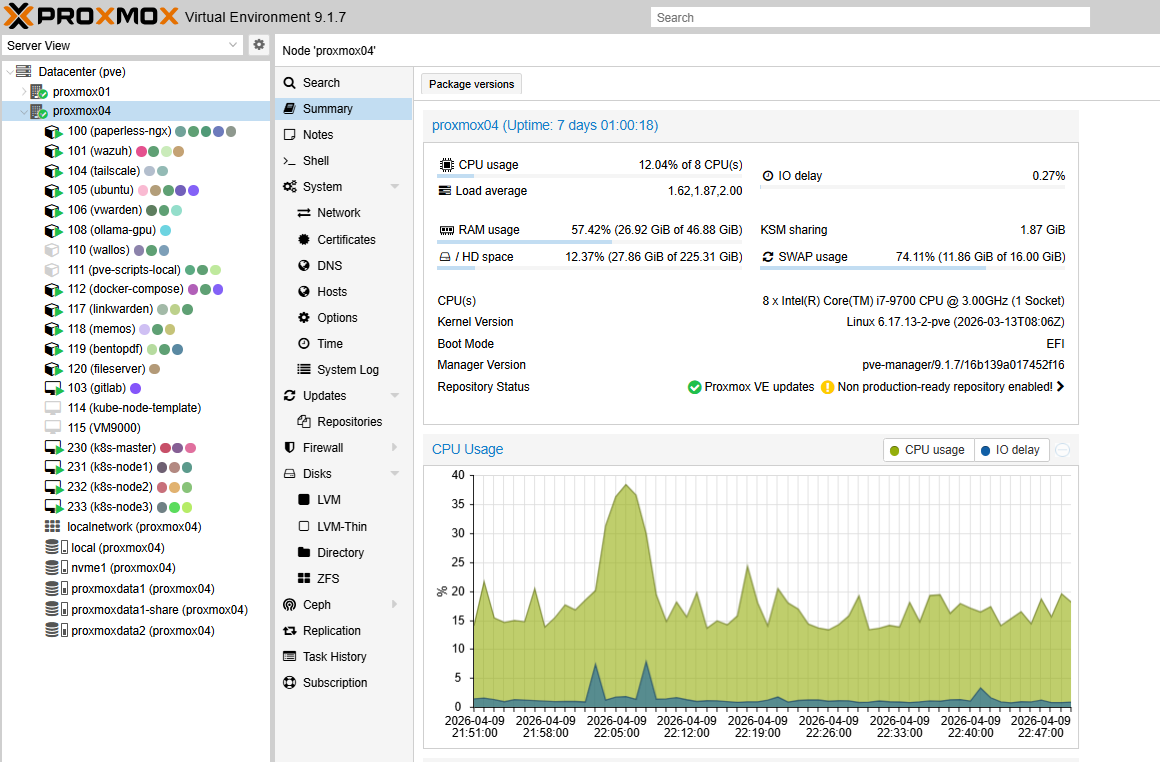

- AI / Proxmox node: Dell Precision 3630 with 48GB RAM and an NVIDIA RTX 3060 GPU. This node runs Ollama, Open WebUI, and Paperless-NGX AI processing. See the full write-up in my Local AI with Ollama article.

- Proxmox Backup Server (PBS): HP EliteDesk Mini with 8GB RAM

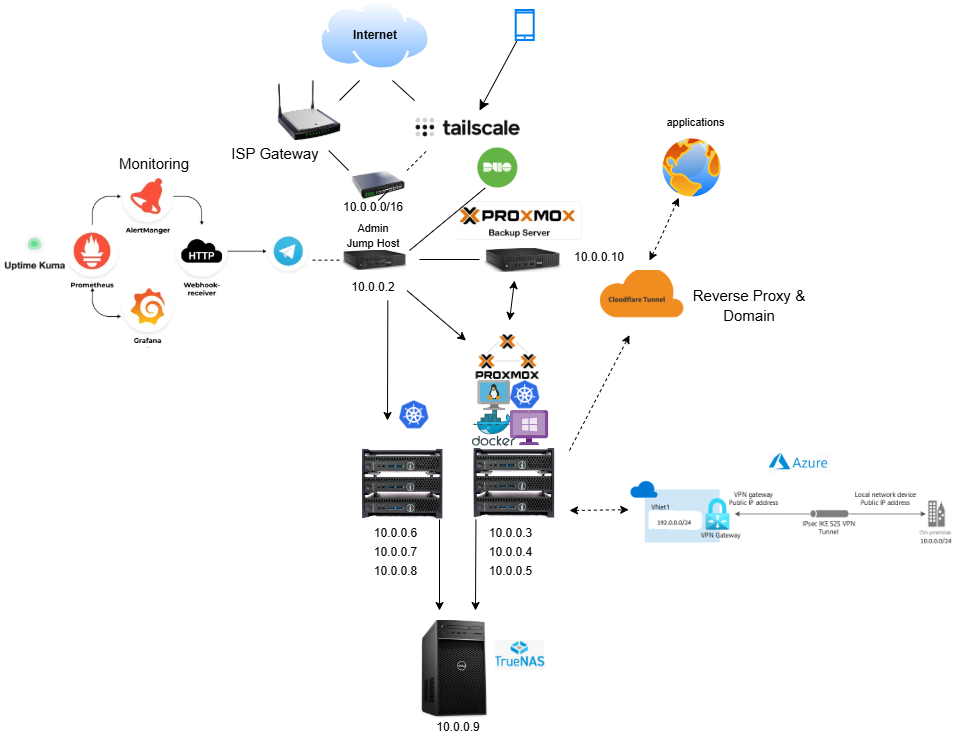

Network Diagram

Homelab networking architecture overview

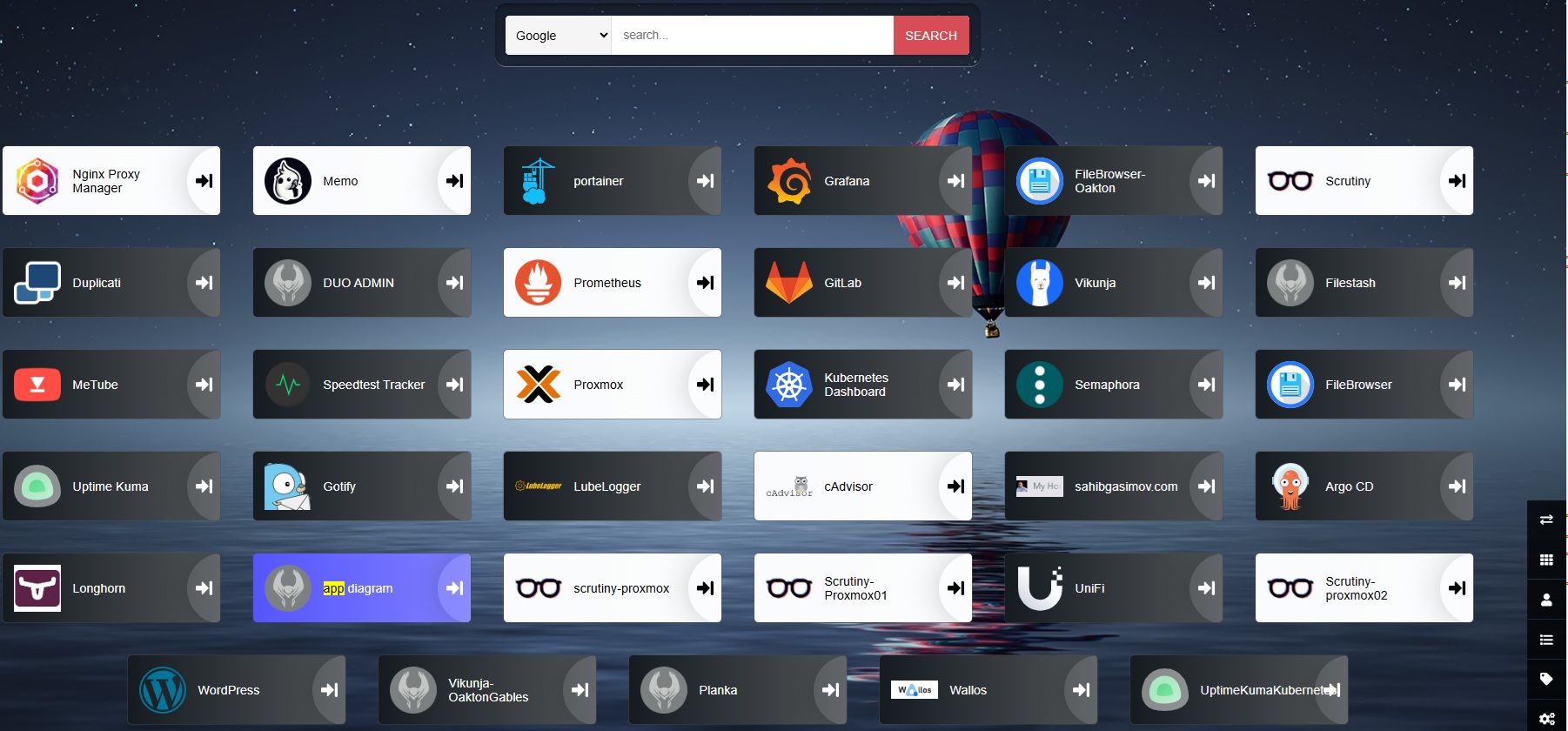

What's actually running on my home lab

These days my homelab is a bit of a mess: dev environments for side projects, Kubernetes clusters, GitOps, a local LLM, workflows, CI/CD pipelines, monitoring tools, a bunch of self-hosted web apps, and a database for a local volunteer group I help with. Honestly, half the fun is seeing what new thing I can get running on it next. Below is my Heimdall dashboard. Heimdall is an open source dashboard that organizes all my applications in one launcher, and I can customize it directly from the app. I use it to record all services running on my homelab, their URLs, and login credentials. Some apps share the same IP but use different ports, so remembering all of them would be a pain.

Most of my homelab apps are deployed as Proxmox VMs or LXC containers, or run on my Kubernetes cluster. The number of applications changes over time, but there are several core services I consistently maintain.

I previously used Docker Compose for everything. I still use it for some workloads, but I have now migrated most of my apps to Kubernetes. The migration is still ongoing, and not everything fits perfectly into Kubernetes, but moving more workloads there helps me build skills that I don't always touch at work but can bring back to it, and that translate directly to modern cloud-native environments.

LXC Containers vs Kubernetes

Not everything needs to run on Kubernetes. I split my workloads between LXC containers on Proxmox and a Kubernetes cluster, depending on what makes sense for each service as well as my own learning goals.

LXC containers on Proxmox are lightweight, fast to spin up, and share the host kernel. They work well for stateful services that need direct hardware access (like GPU passthrough for Ollama), apps that don't need horizontal scaling, or services where I want full OS-level control without the overhead of a full VM. Backups and snapshots are handled natively by Proxmox, and restoring a broken LXC takes seconds.

Another advantage of LXC is the Proxmox Community Helper Scripts. Each script builds an LXC container with the application already installed and ready to use.

Services running on LXC: Paperless-NGX, Ollama LLM, Wazuh, GitLab, Vaultwarden, Tailscale, Beszel, Docker Compose workloads, WalOS, Linkwarden, Memos, Stirling-PDF, Kasm, and several others.

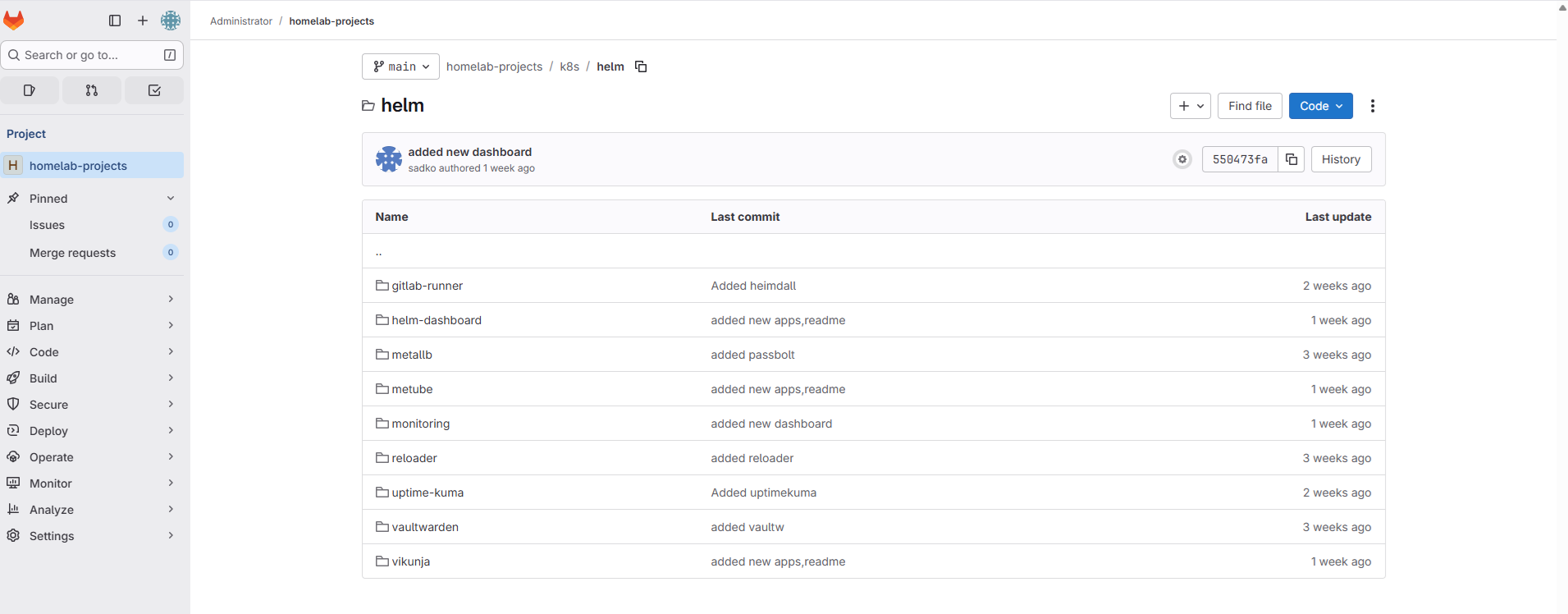

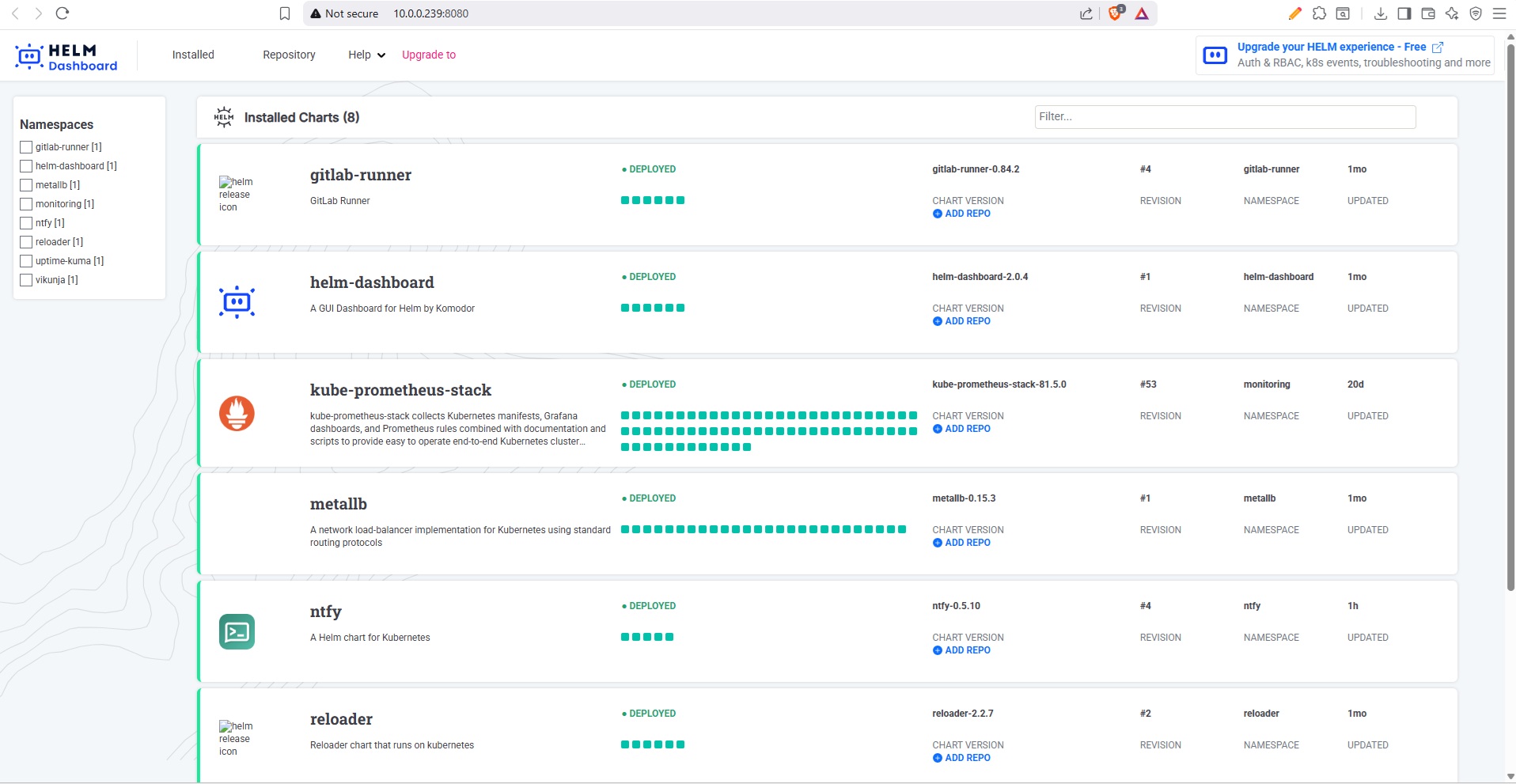

Kubernetes gives me declarative deployments, Helm-based package management, rolling updates, and a GitOps workflow through ArgoCD. For services where I want reproducible deploys, easy upgrades, and centralized monitoring, Kubernetes is the better fit. It is also the platform I work with professionally, so running it in my homelab keeps my skills sharp.

Current Helm releases on my cluster:

- ArgoCD - GitOps continuous delivery

- GitLab Runner - CI/CD pipeline execution

- Helm Dashboard - web UI for Helm releases

- kube-prometheus-stack - Prometheus, Grafana, Alertmanager

- MetalLB - bare-metal load balancer

- ntfy - push notifications for cron jobs

- Reloader - auto-restart pods on config changes

- Semaphore - Ansible automation UI

- Uptime Kuma - service monitoring

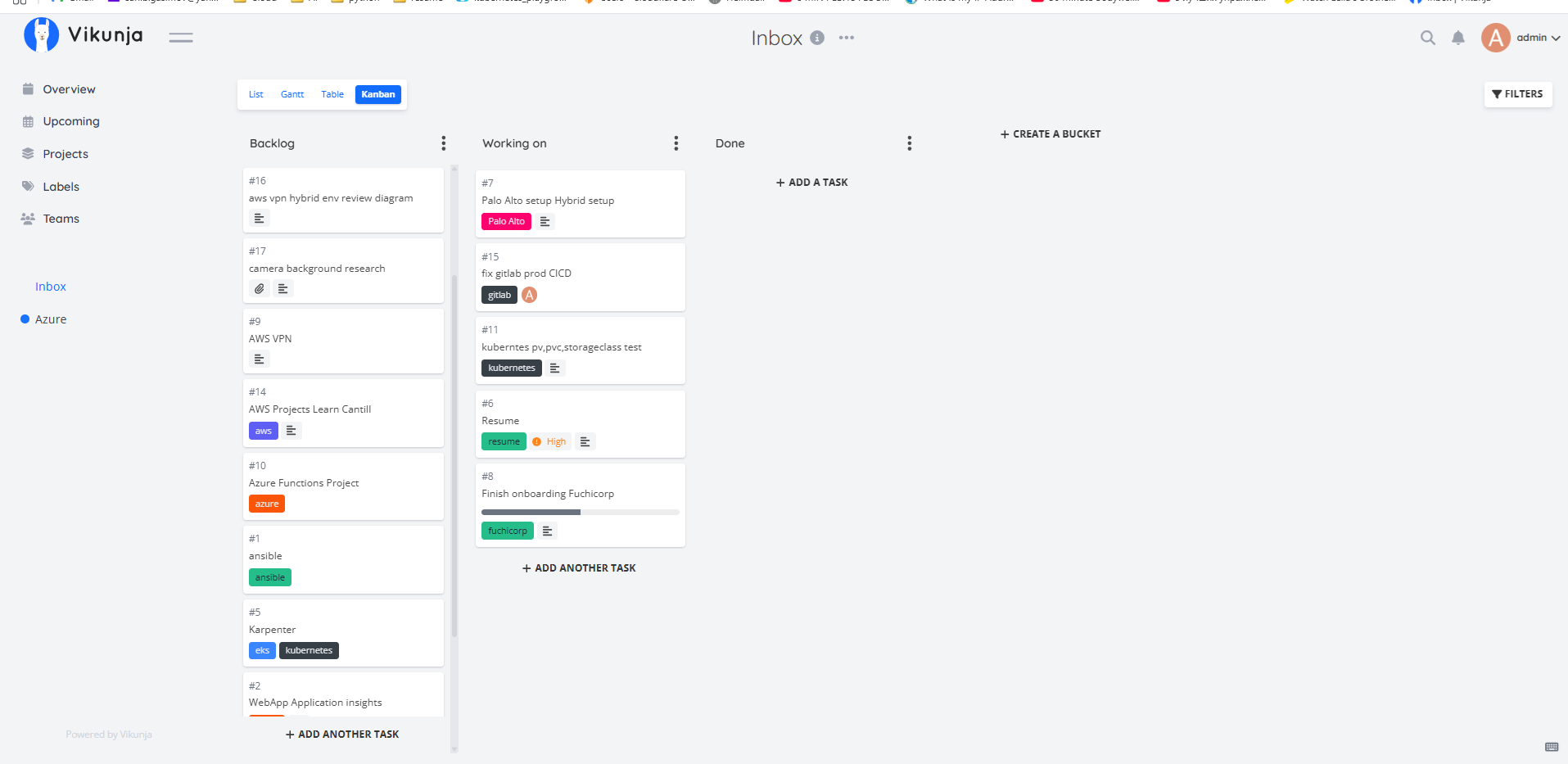

- Vikunja - task management / Kanban board

Homelab's Core

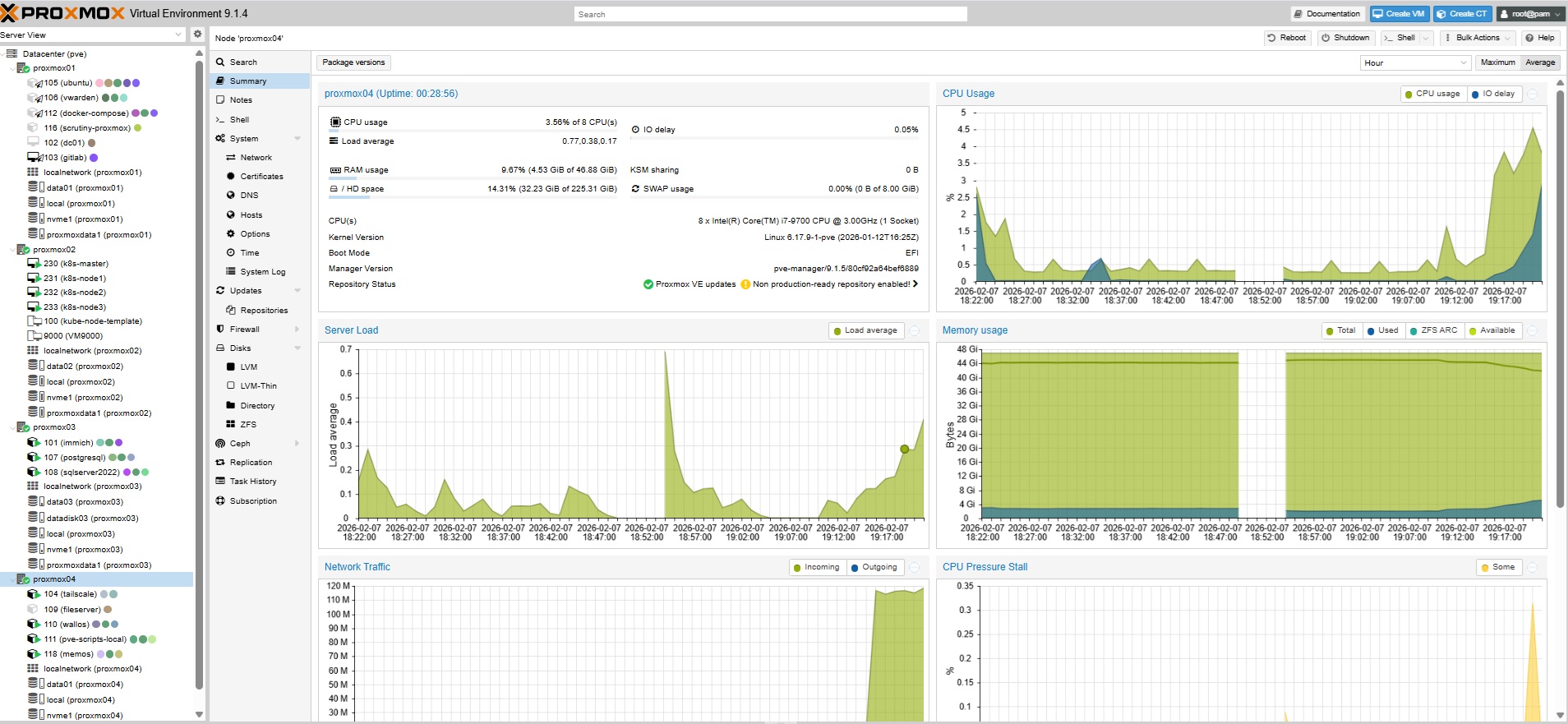

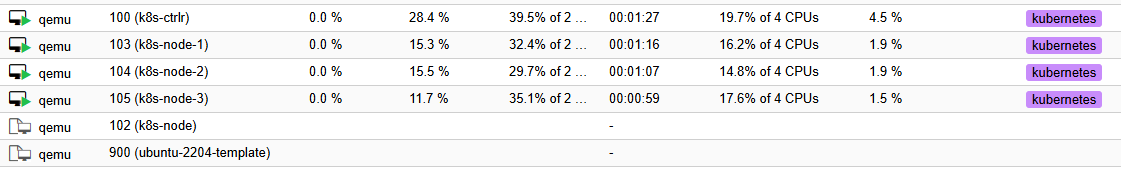

1) Proxmox Cluster - One of the reasons I started exploring Proxmox virtualization was to set up a home Kubernetes cluster.

I started with a single node, but over time it grew to three nodes. Running Kubernetes in the cloud is expensive, so I was looking for a cost-effective, free alternative. I did try running K8s on bare metal on the mini PCs, but keeping them all updated and healthy was kind of a pain... Proxmox VMs made life much easier, considering snapshots are so easy to take and restore. For this setup Proxmox worked well. I spun up my first control plane after watching the LearnLinux TV tutorial "Build a Kubernetes Cluster on Proxmox", which I still highly recommend to anyone starting from scratch.

2) Proxmox Backup Server - Nothing specific to say about it, just a great tool for backing up and restoring VMs. I've already needed it a few times after messing up configs. Totally a lifesaver.

3) TrueNAS - It currently runs on a Dell Tower 3630 with four 4TB disks, one of the best Dell towers I've ever had. The initial idea was a basic network file share where I could store my personal and family stuff. I started with Samba running on Ubuntu Server, but eventually I wanted something that would let me create and manage storage pools, shares, and backups.

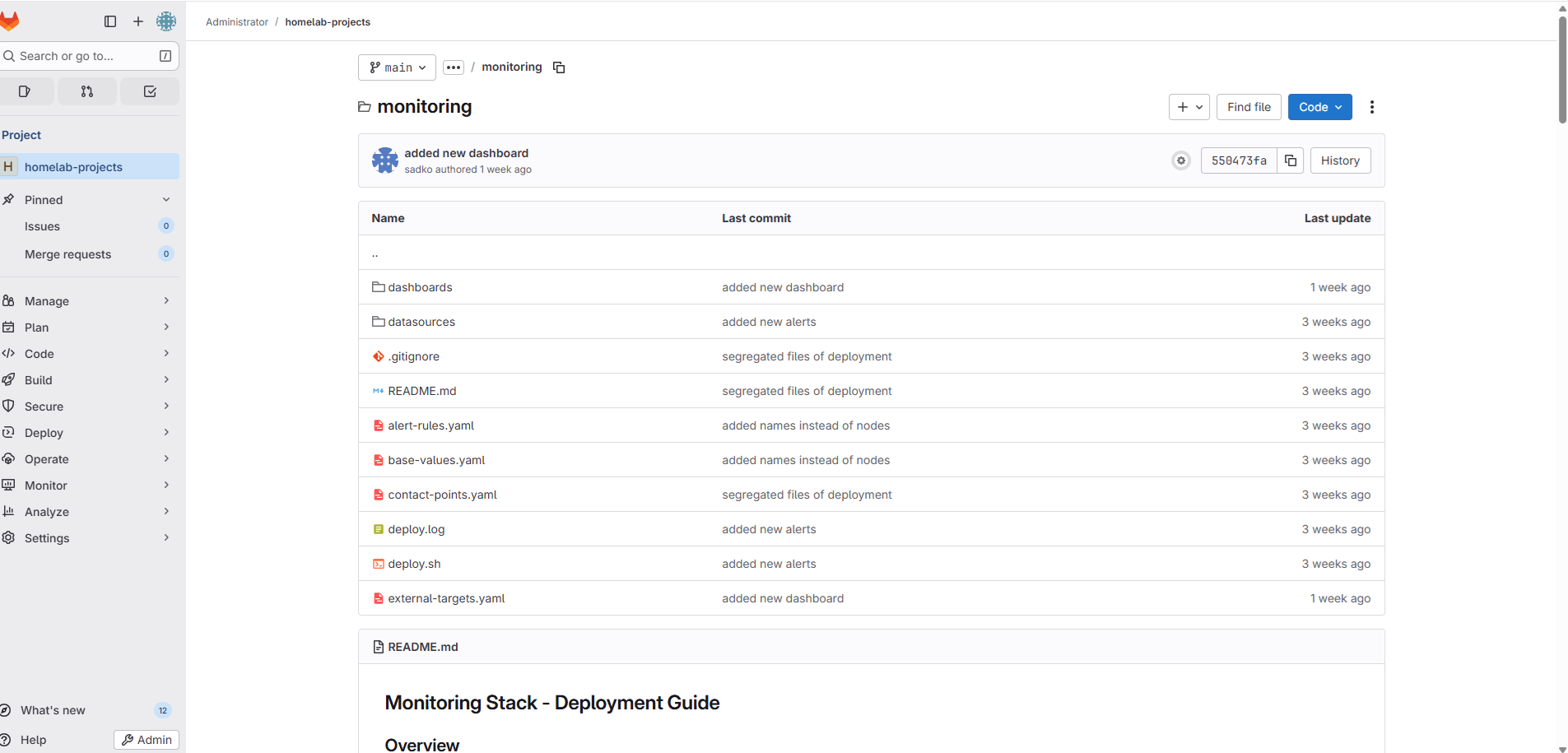

4) GitLab - I used to run a GitLab server on docker-compose. The pros were definitely the speed and job processing time; however, one day it suddenly crashed, and it took me a lot of effort to recover the data. Since then I've moved GitLab to a Proxmox VM with backups and snapshots on an SMB share, which makes my life much easier. I also run a GitLab Runner on Kubernetes via Helm, which handles my CI/CD pipelines for deploying apps to the cluster.

I kept losing the code of my apps and artifacts in different folders, so I started managing all of my Kubernetes applications with Helm charts and Terraform. This way I can manage and deploy my applications more easily, and reuse the code faster if needed. I also use a GitOps approach to manage my Kubernetes cluster: I have a separate GitLab repository for the cluster, and I use ArgoCD to manage the deployment of my applications.

5) Ollama - My local LLM server, running on my Dell Precision 3630 with GPU passthrough to an LXC container. I use it for document processing via Paperless-NGX, as a coding assistant in VS Code through the Continue.dev extension, and as a general chat interface through Open WebUI. Everything runs on the RTX 3060 with no cloud API calls. For the full setup details, see my Local AI with Ollama article.

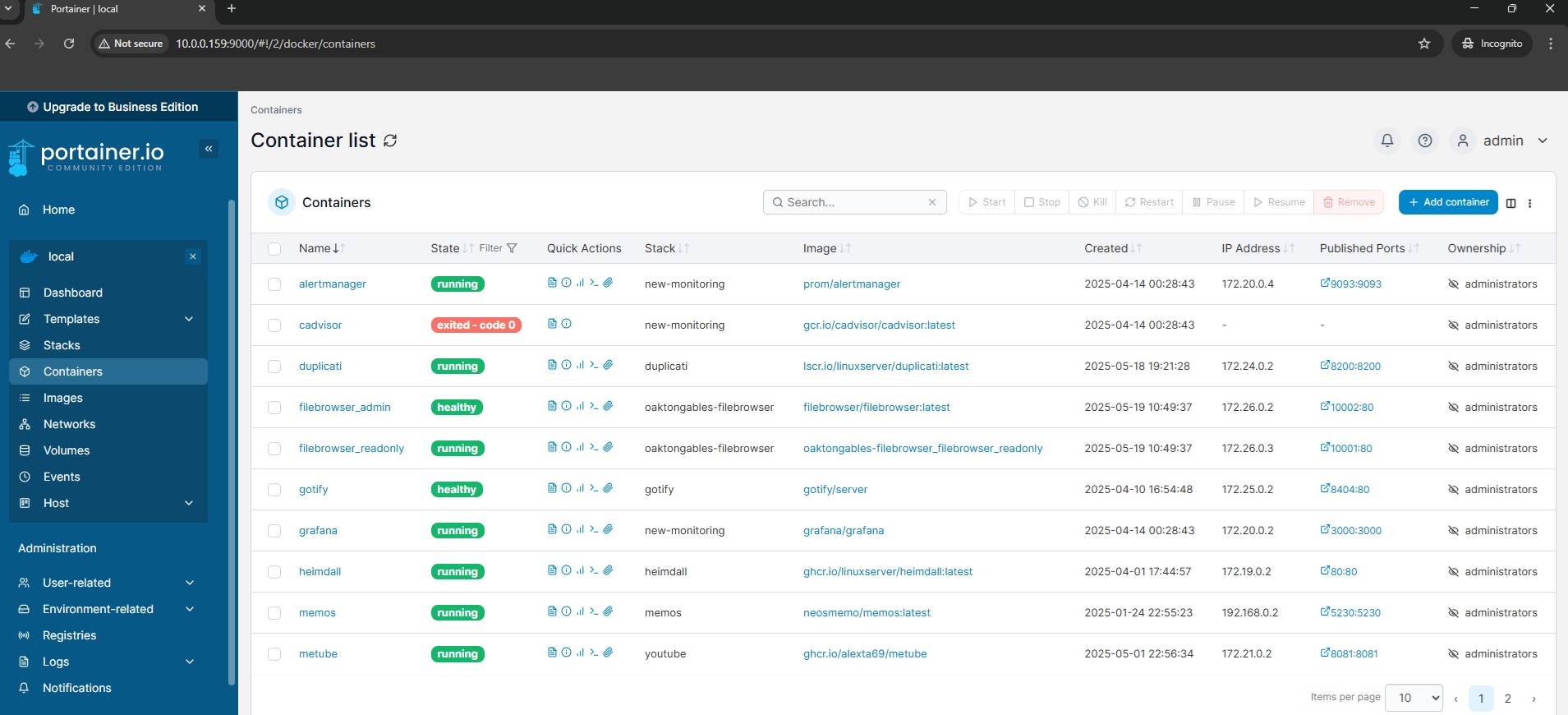

6) Portainer - I use it for managing my Docker containers, even though I currently don't have many. In general it comes in super handy when I need to clean up old images or troubleshoot a container.

7) AWX - I run AWX on Kubernetes as a pod for running Ansible playbooks. I use some of the playbooks for patching VMs and physical servers, configuration management, or timezone adjustment on VMs. I have automated task schedulers that take care of my VMs' config.

I originally ran AWX on a Red Hat VM, but it was pretty slow and jobs would take ages. Moving it to Kubernetes with Helm made a big difference. Now jobs run much faster, and it's easier to maintain. Update: replaced with Semaphore!

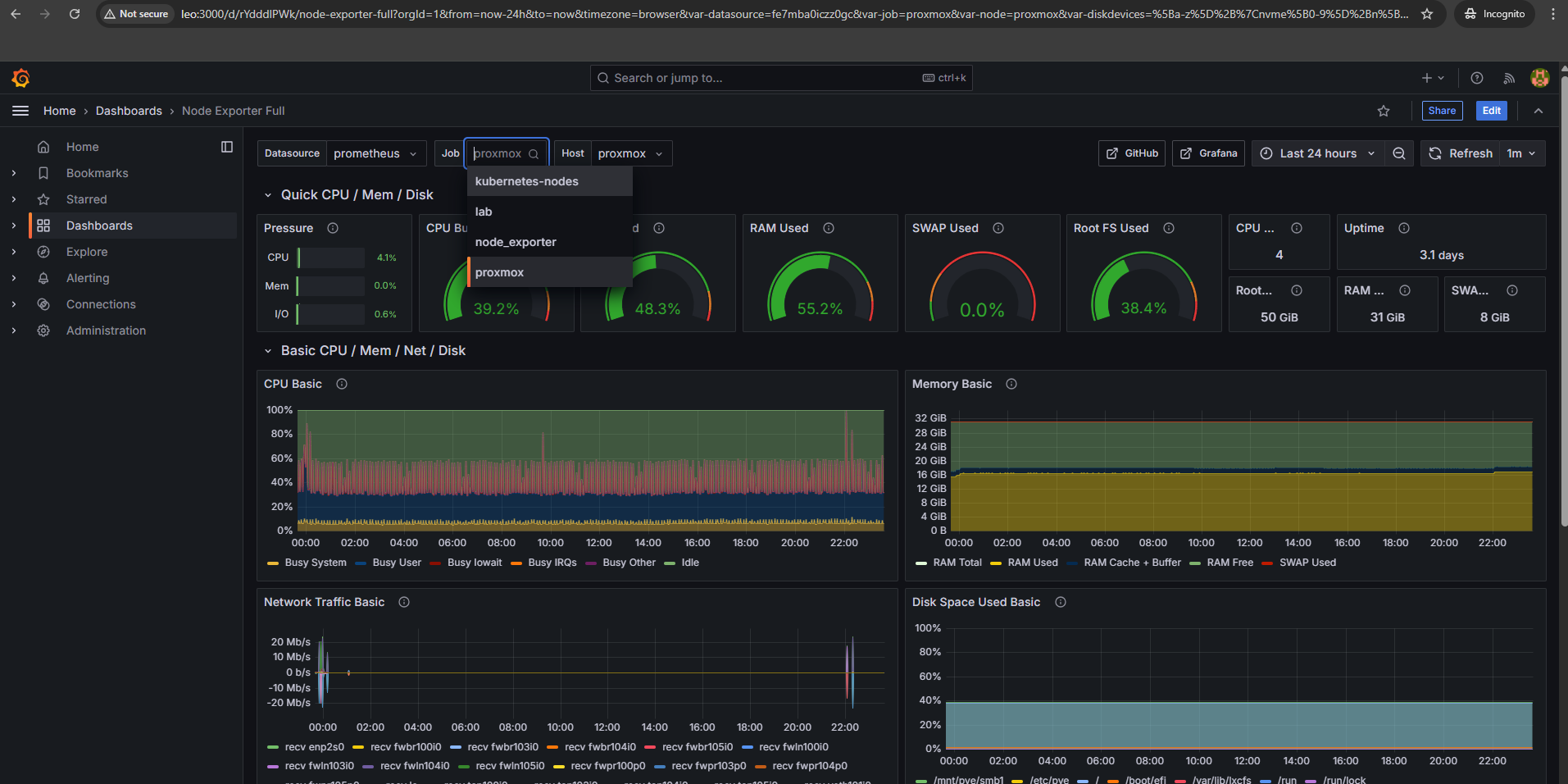

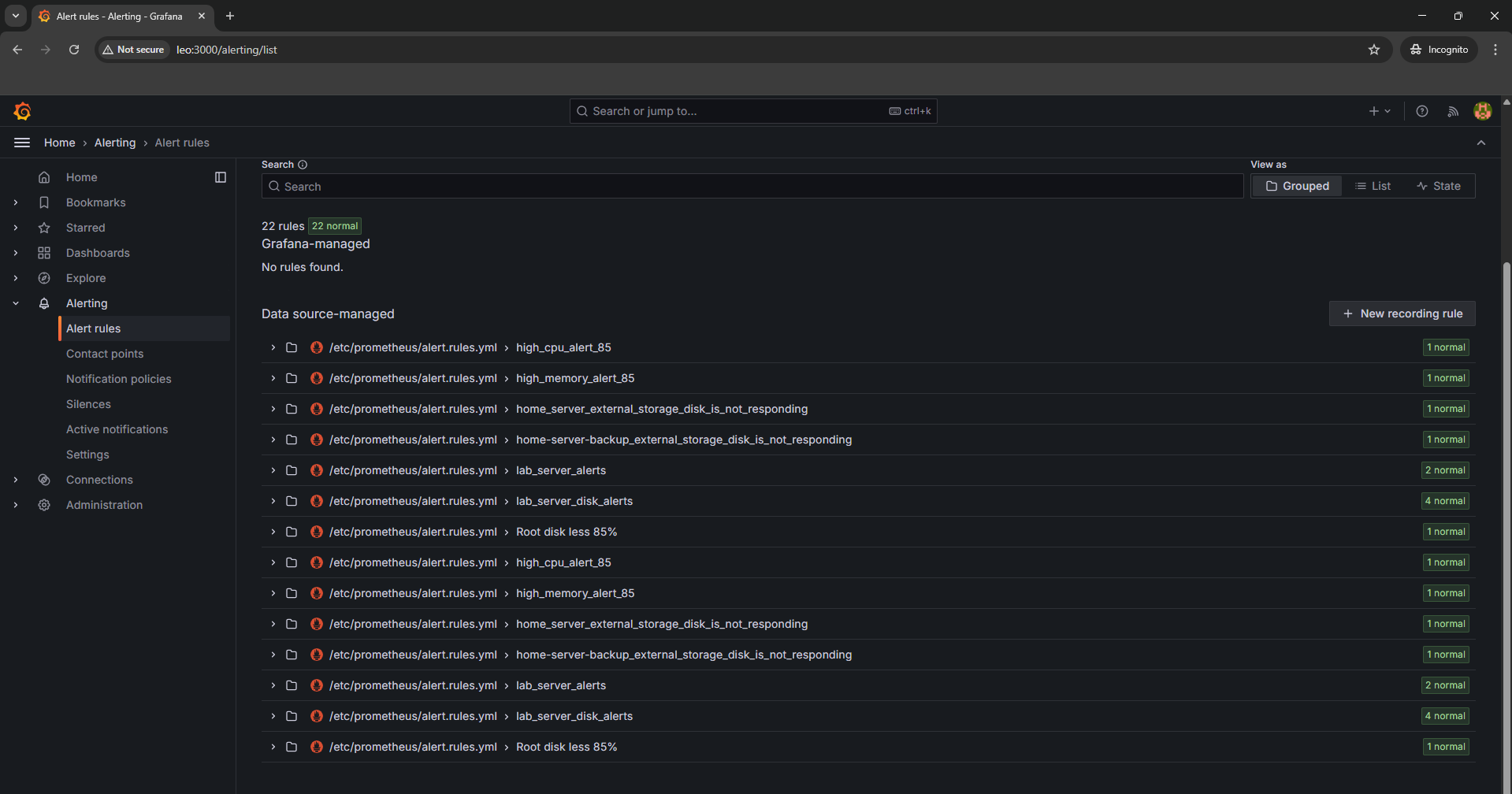

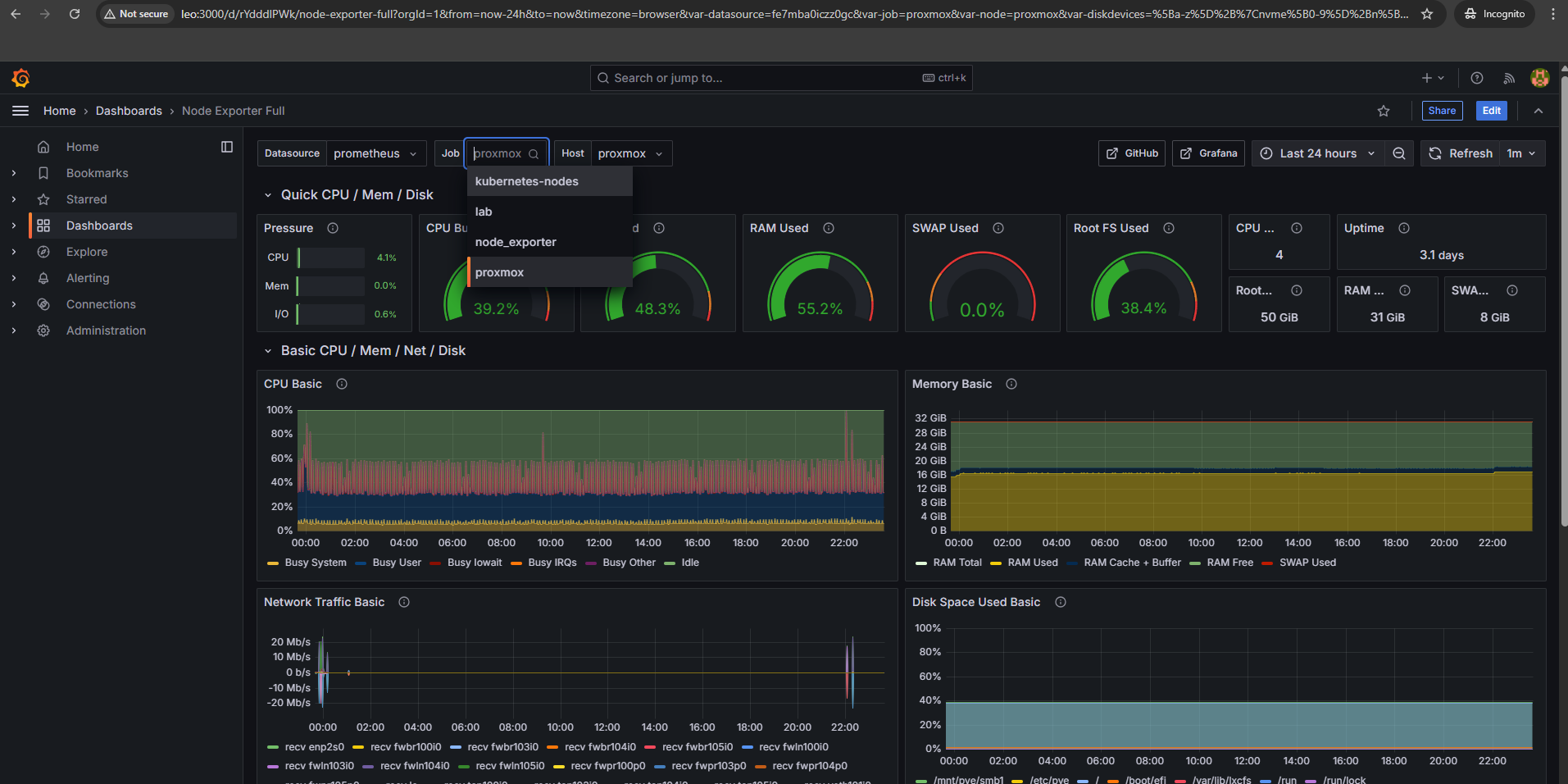

8) Grafana, Prometheus, Alertmanager - I use these for monitoring, alerting, and visualization. I have Alertmanager configured to alert me when my servers hit high CPU or memory usage. I originally ran this on docker-compose, but now I deploy the full kube-prometheus-stack on Kubernetes via Helm. Much easier to manage, and it comes with great default dashboards.

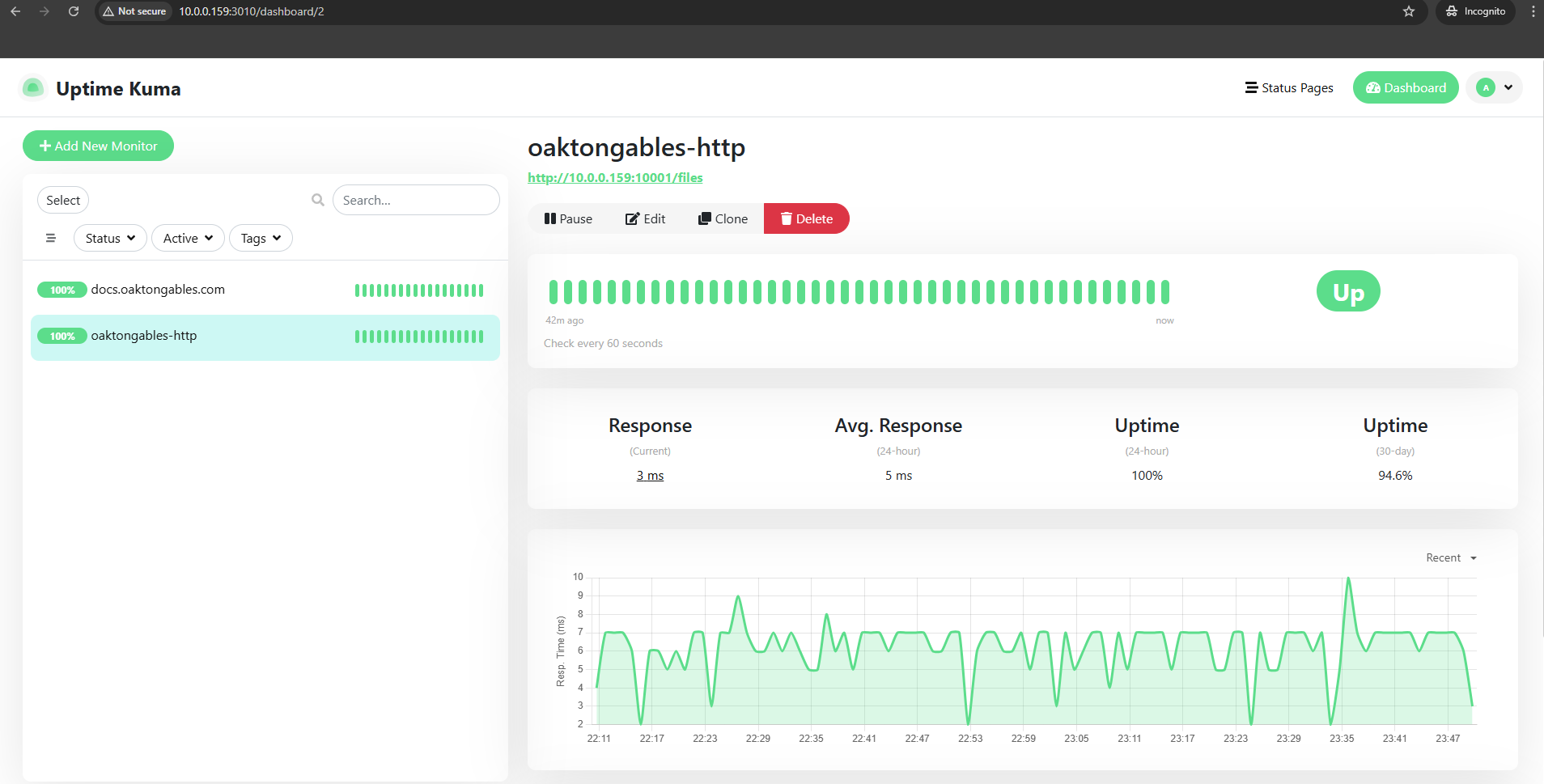

9) Uptime Kuma - Super easy to configure, just pings my services and lets me know if anything's down. I run it on Kubernetes now via Helm. Small tool, but super handy.

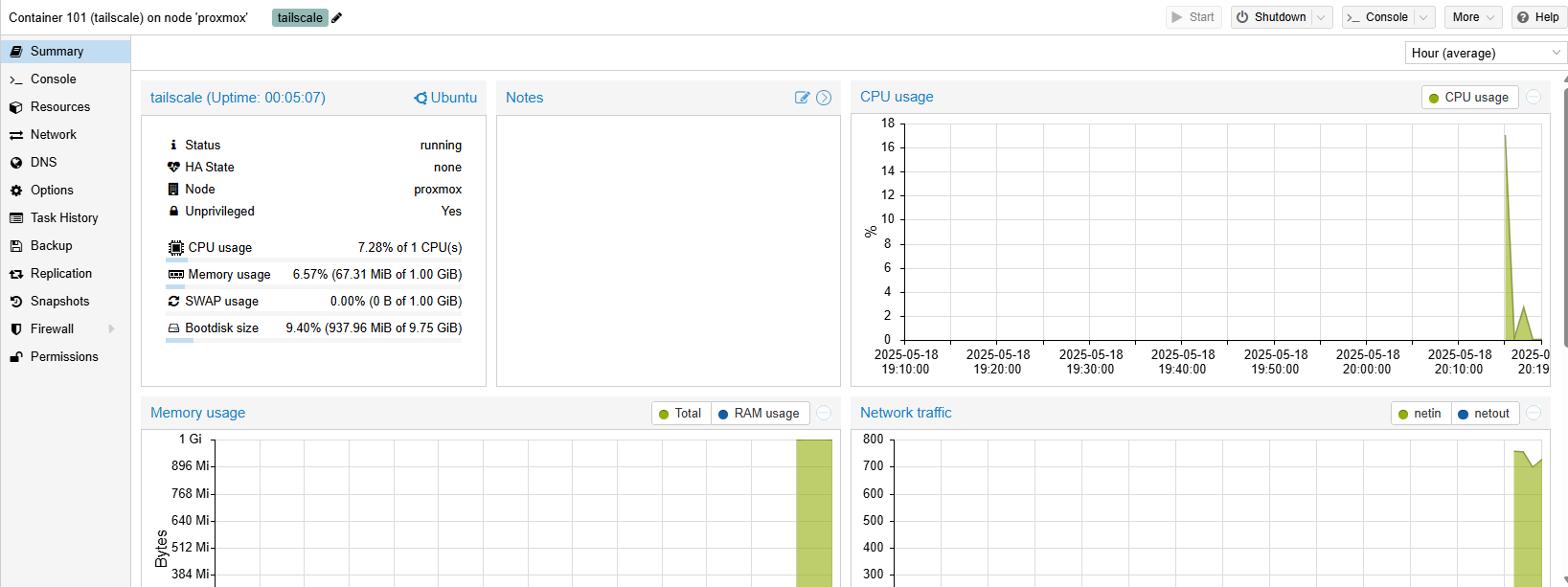

10) Tailscale - I use Tailscale VPN with one of the VMs on my Proxmox cluster configured as an exit node. This lets me securely access my local network from anywhere, without needing each individual device to be connected to the VPN. Super useful when I'm traveling or working remotely.

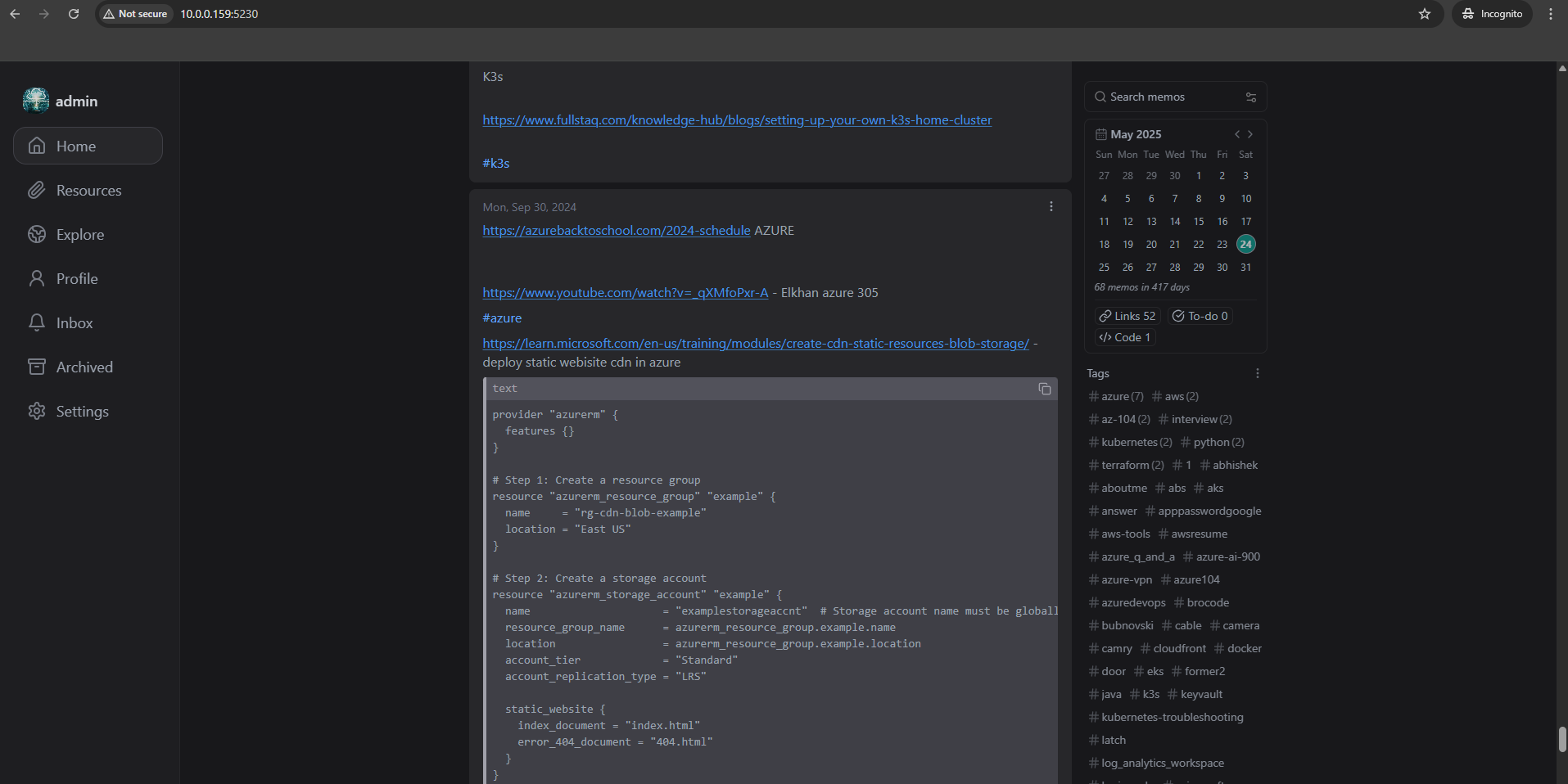

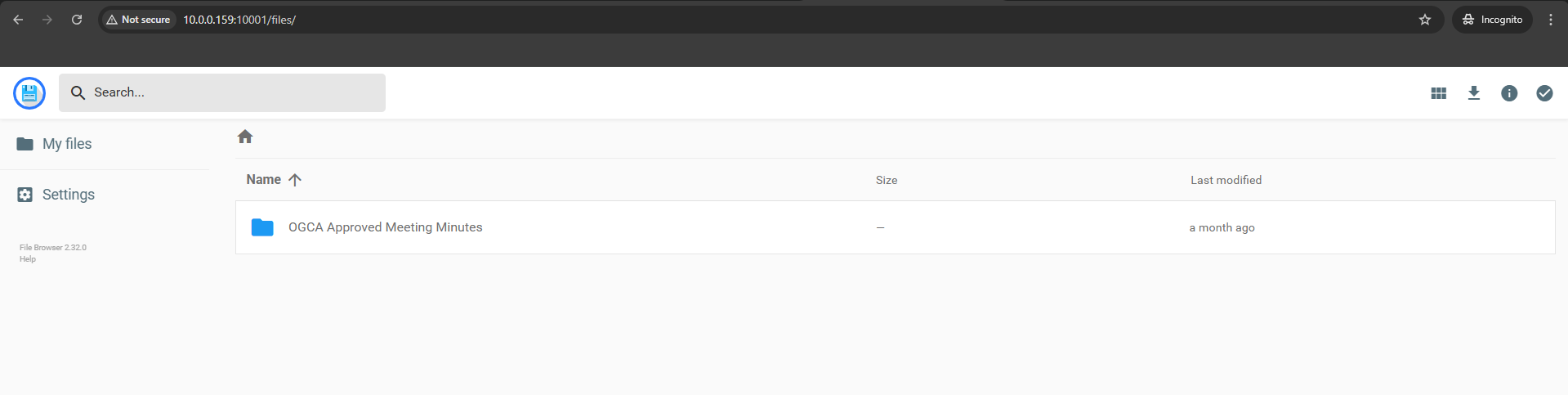

11) Memos, Vikunja, and Filebrowser - These three apps are my go-to tools for staying organized: Memos for quick notes, Vikunja for task tracking (my Kanban to-do board), and Filebrowser for quick file sharing.

12) ntfy - A simple self-hosted push notification server. I deploy it on Kubernetes via Helm and use it to get phone notifications when my cron backup jobs finish or fail: just a one-liner curl call at the end of each cron entry. I have the ntfy Android app on my phone, and when I'm away from home I connect via Tailscale to reach my local ntfy instance. Simple and does exactly what I need.
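For anyone who would rather send the notification from a script than from a curl one-liner, here is a minimal Python sketch of the same idea. It relies on ntfy accepting a plain HTTP POST to the topic URL, with the message as the request body and optional `Title` and `Priority` headers. The function names, the instance URL, and the topic name are made up for illustration and are not taken from my actual cron jobs.

```python
import urllib.request


def build_ntfy_request(base_url: str, topic: str, message: str,
                       title: str = "", priority: str = "default") -> urllib.request.Request:
    """Build (but do not send) a POST request that publishes a message to an ntfy topic."""
    headers = {"Priority": priority}
    if title:
        headers["Title"] = title
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/{topic}",
        data=message.encode("utf-8"),  # ntfy takes the message as the raw request body
        headers=headers,
        method="POST",
    )


def notify(base_url: str, topic: str, message: str, **kwargs) -> int:
    """Send the notification and return the HTTP status code."""
    request = build_ntfy_request(base_url, topic, message, **kwargs)
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Example call (hypothetical URL and topic, replace with your own):
# notify("http://ntfy.example.internal", "backups", "Nightly backup finished", title="Backup OK")
```

Splitting the request builder from the sender keeps the part that decides what gets sent easy to check without touching the network.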

13) Helm Dashboard - A simple web UI for managing my Helm releases on Kubernetes. Instead of running helm list and digging through CLI output, I can see all releases, their status, values, and upgrade history in a browser. Handy for a quick overview of what's deployed across namespaces.

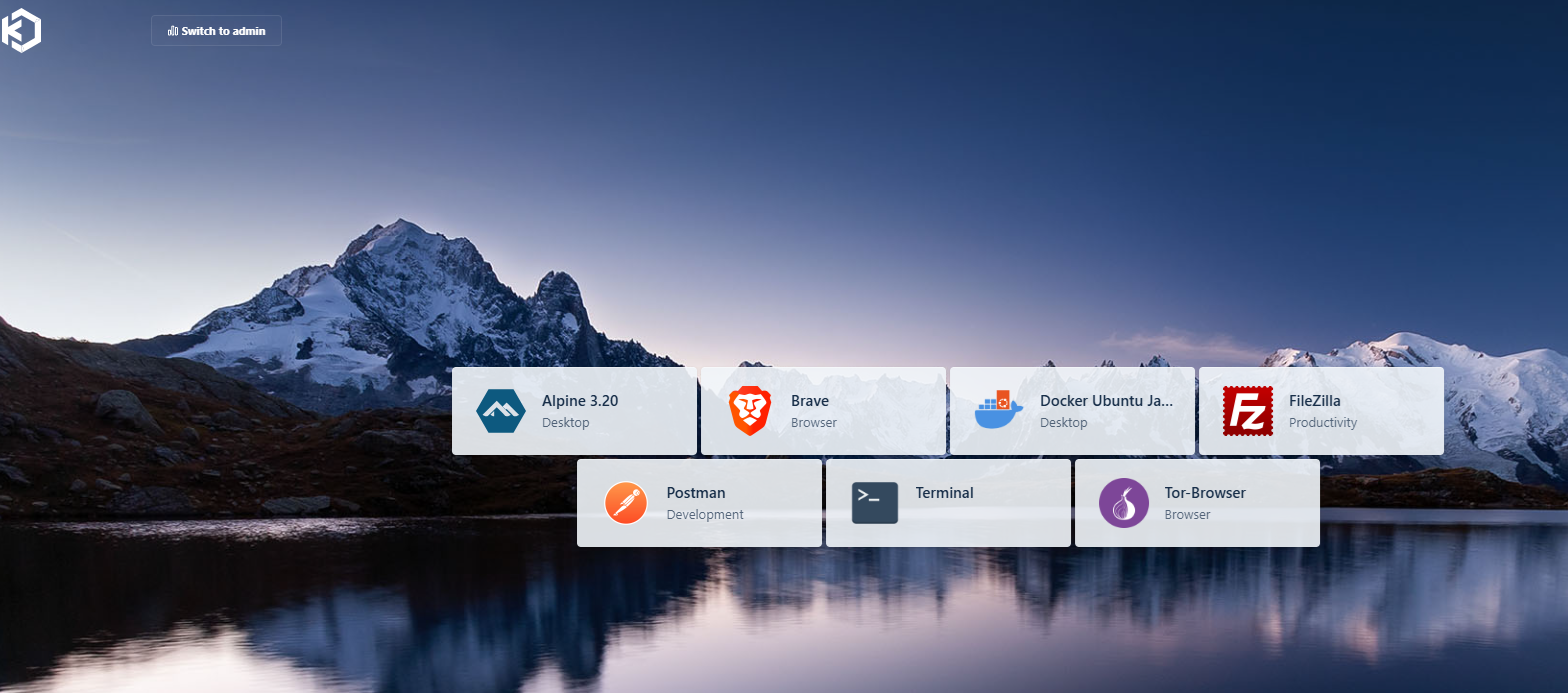

14) Kasm Workspaces - It's like being able to set up browser-accessible desktops whenever you need them. I use the free version for safely hopping onto websites, apps, or even full desktop environments, all wrapped up in isolated containers. When I'm done browsing or testing something new, I can start fresh without any leftovers. Plus, if I want to stay extra private, I just run Firefox or Opera with a VPN and then close the session. It's a nice way to keep things under wraps.

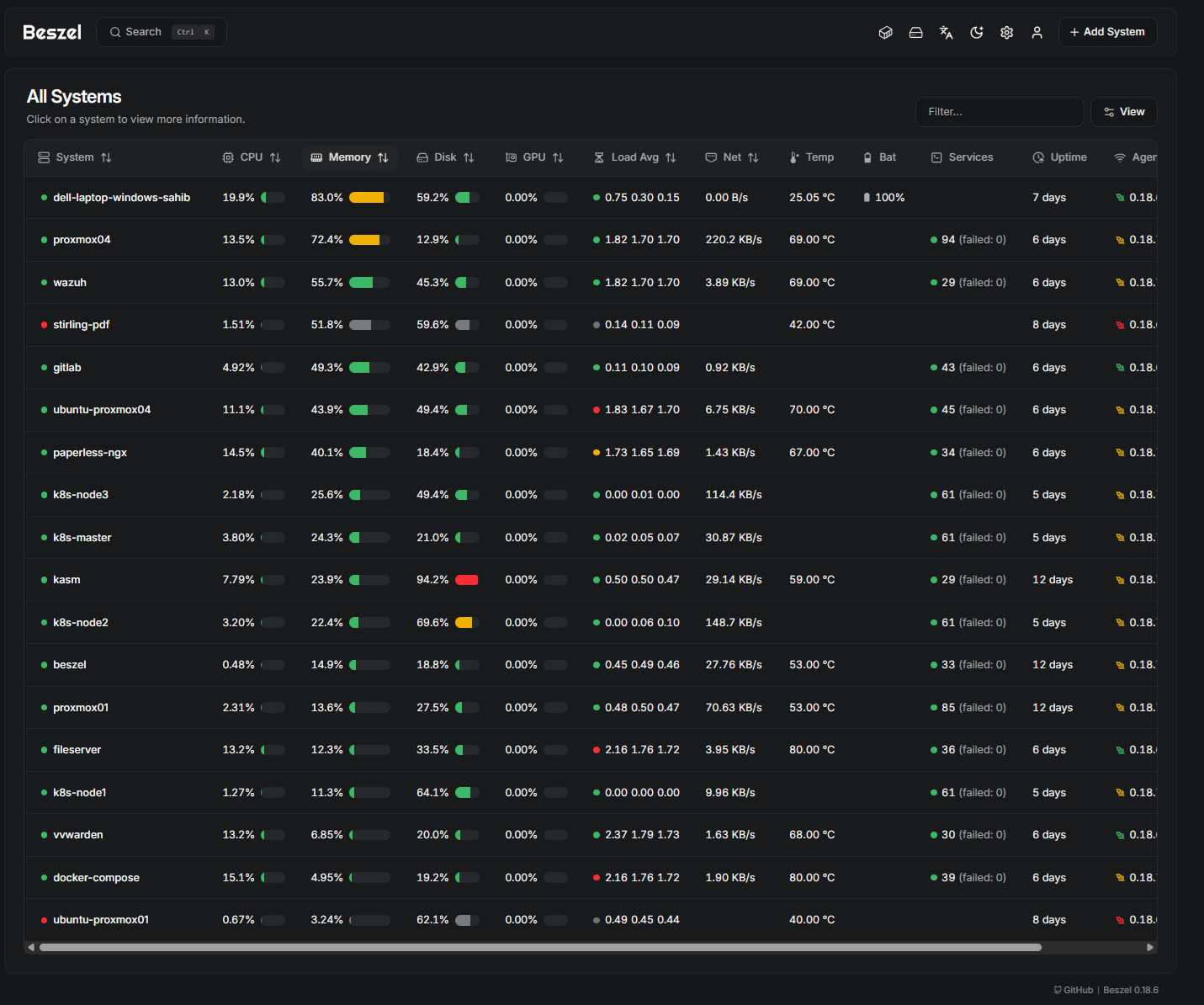

15) Beszel - A lightweight server monitoring tool that tracks CPU, memory, disk, and network usage across all my machines. It runs as an LXC container on Proxmox and collects metrics from agents deployed on each host. Simple to set up, low overhead, and gives me a quick overview of system health without the complexity of a full monitoring stack.

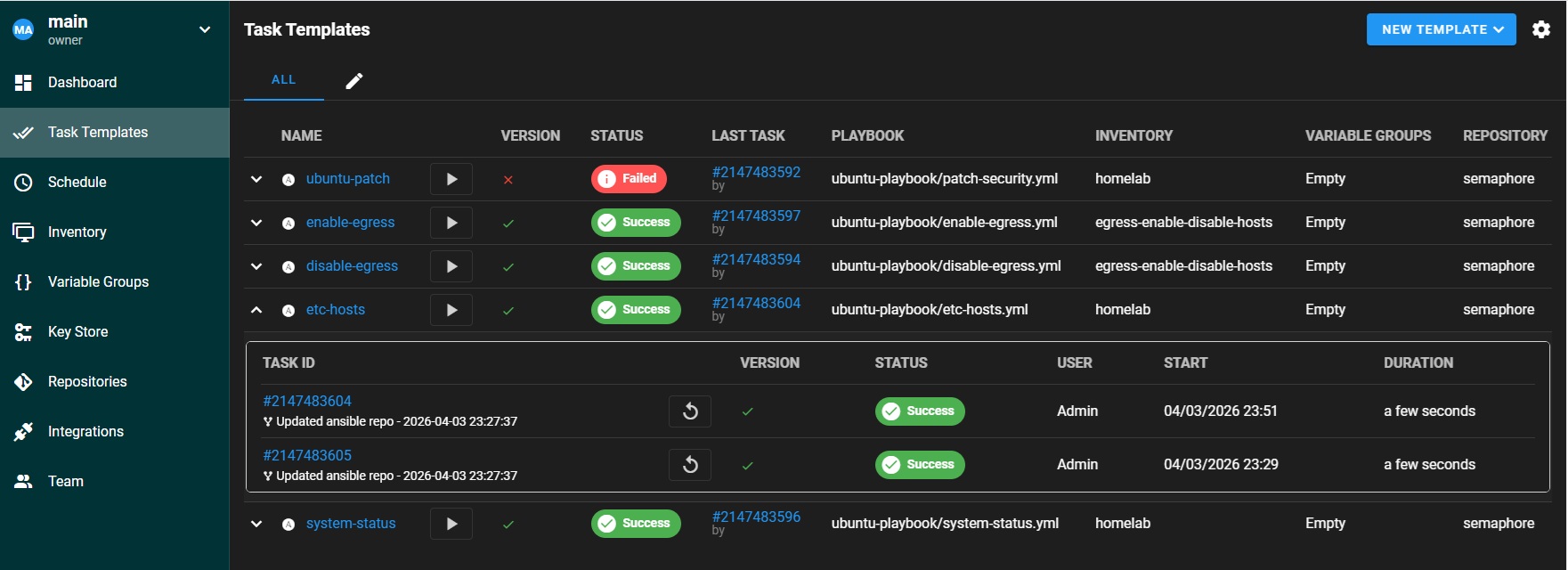

16) Semaphore - A web UI for running Ansible playbooks. I deploy it on Kubernetes via Helm and use it to schedule patching, config management, and maintenance tasks across all my VMs and LXC containers. It replaced AWX for most of my automation needs because it is much lighter and faster to deploy.

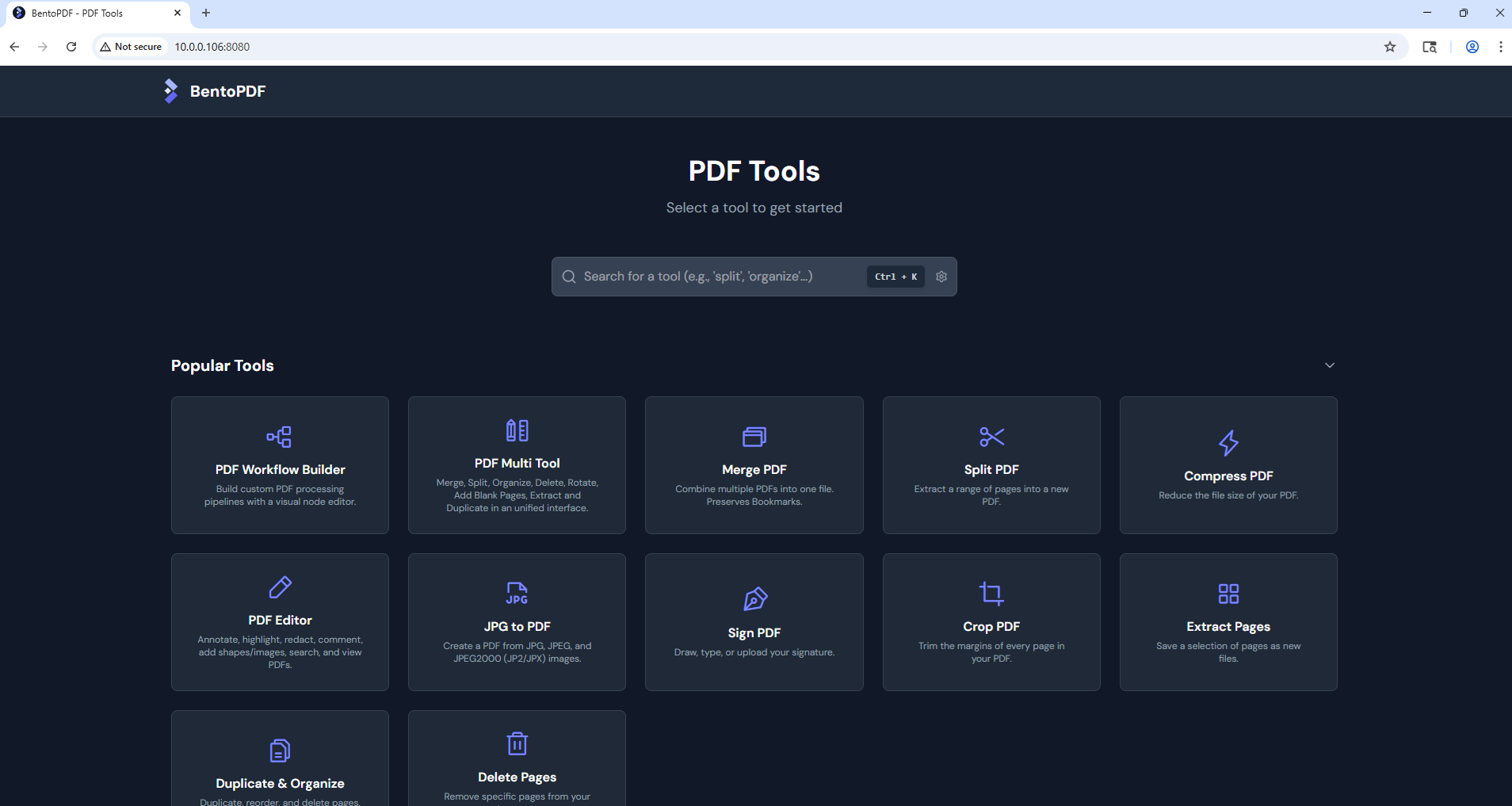

17) BentoPDF - A self-hosted PDF tool for merging, splitting, compressing, and converting documents. I run it as an LXC container on Proxmox. For privacy, I don't use free online PDF tools that upload files to unknown servers, and I don't want to pay for Adobe. BentoPDF keeps everything local.

Security and Networking Setup

Networking and security in a homelab are always a bit of a rabbit hole. I started simple, just exposing a couple of services on ports, but after a few too many random bots hitting my open ports, I decided to tighten things up...

I use Cloudflare as my reverse proxy for anything web-based. It handles my three domains, manages SSL certificates, and forwards traffic to local Docker ports. I also run Cloudflare Tunnels to access certain services without needing to open any ports, which makes life a lot simpler.

For internal apps, I started experimenting with Cloudflare Zero Trust to lock things down even further with its policies.

My bastion server has Duo MFA for SSH access. I use a Tailscale exit node to access my local network when I need to connect remotely.

For secrets and passwords, I run Vaultwarden (a lightweight Bitwarden-compatible server) on Kubernetes via Helm. Syncs with my browsers and phone.

Other things I've put in place:

- Fail2Ban to block brute-force attempts on exposed ports (this alone catches a lot)

- UFW firewall on all VMs and Docker hosts. On data-sensitive servers like my fileserver and Vaultwarden, I block all outbound (egress) traffic by default. This way, even if something gets compromised, it cannot phone home. I only temporarily enable egress during patching using an Ansible playbook triggered through Semaphore, and disable it again once updates are done.

- Wildcard SSL via Cloudflare DNS for external services
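The egress toggle for patching boils down to "open, patch, always close again". My real version is an Ansible playbook run from Semaphore, so the Python below is only a sketch of the logic, not that playbook. It assumes the host uses `ufw default allow|deny outgoing` for its outbound policy, and the helper names are made up for illustration.

```python
import subprocess
from contextlib import contextmanager


def egress_command(allow: bool) -> list[str]:
    """Return the ufw command that sets the default outbound policy."""
    policy = "allow" if allow else "deny"
    return ["ufw", "default", policy, "outgoing"]


@contextmanager
def temporary_egress(run=subprocess.run):
    """Allow outbound traffic for the duration of the block, then deny it again.

    The deny step sits in `finally`, so egress is closed even if patching fails.
    `run` is injectable so the sequence can be tested without touching ufw.
    """
    run(egress_command(True), check=True)
    try:
        yield
    finally:
        run(egress_command(False), check=True)


# Example (needs root and ufw installed on the host):
# with temporary_egress():
#     subprocess.run(["apt-get", "update"], check=True)
```

The point of the `finally` is that a failed update never leaves a sensitive server with outbound traffic open.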

I use Grafana Loki to pull in logs from the bastion and key servers, all centralized in Grafana.

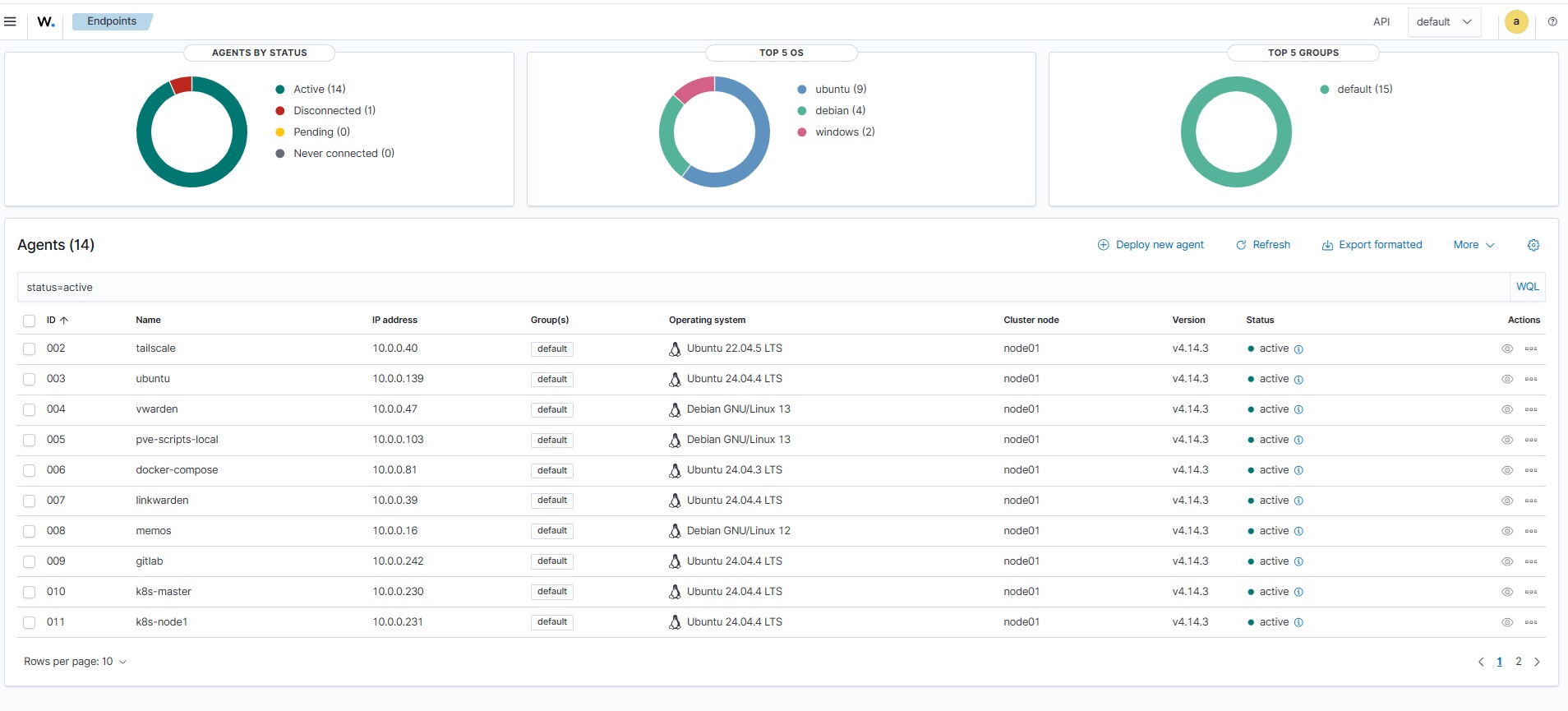

I also run Wazuh - an open source security platform for threat detection, integrity monitoring, and log analysis. It runs as a VM on Proxmox with agents on my servers and Kubernetes nodes. Gives me a centralized view of security events across the whole homelab - failed logins, file integrity changes, suspicious processes.

Load Balancing with MetalLB

One challenge with running Kubernetes in a homelab is that there's no cloud provider to hand out external IPs for LoadBalancer services. MetalLB solved that problem for me. It's a bare-metal load balancer that gives services real IPs from a pool I define.

I deployed it via Helm and configured it in Layer 2 mode with an IP range from my home network. Now when I create a LoadBalancer service, MetalLB assigns it an IP automatically, and all pods behind that service are reachable via that IP.
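To make the "pool of IPs" idea concrete, here is a toy Python model of handing out addresses from a fixed range. It is not MetalLB's actual allocation code, just a sketch of the concept: each service gets the first free address and keeps it until released. The class name and the address range are hypothetical.

```python
import ipaddress


class AddressPool:
    """Toy model of a load balancer IP pool (illustration only, not MetalLB code)."""

    def __init__(self, first: str, last: str):
        start = int(ipaddress.IPv4Address(first))
        end = int(ipaddress.IPv4Address(last))
        if end < start:
            raise ValueError("last address must not be below first address")
        self._addresses = [ipaddress.IPv4Address(n) for n in range(start, end + 1)]
        self._assigned: dict[str, ipaddress.IPv4Address] = {}  # service name -> address

    def assign(self, service: str) -> ipaddress.IPv4Address:
        """Return the service's address, allocating the first free one if needed."""
        if service in self._assigned:
            return self._assigned[service]
        used = set(self._assigned.values())
        for address in self._addresses:
            if address not in used:
                self._assigned[service] = address
                return address
        raise RuntimeError("address pool exhausted")

    def release(self, service: str) -> None:
        """Free the address held by a deleted service."""
        self._assigned.pop(service, None)


# Example with a hypothetical range carved out of a home network:
pool = AddressPool("192.168.1.240", "192.168.1.242")
grafana_ip = pool.assign("grafana")
```

The practical takeaway is the same as with the real thing: keep the range outside your DHCP scope and size it for the number of LoadBalancer services you expect.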

Monitoring Setup

My monitoring stack now runs on Kubernetes using kube-prometheus-stack deployed via Helm. It includes Prometheus, Grafana, Alertmanager, and various exporters. I also run node-exporter on my physical hosts and Proxmox nodes to collect system metrics.

The setup alerts me via email when things go wrong: high CPU, memory issues, disk space running low, or services going down. Helm makes it easy to manage and update the whole stack with a single values file.

All my Grafana dashboards are managed through IaC, no manual clicking around. Dashboards are defined in code and deployed automatically, so everything stays consistent and reproducible. I use cAdvisor for container metrics.

Wrap-Up

Like any homelab, mine is a constant work-in-progress. I'm always trying new tools, breaking things, fixing them (sometimes), and learning along the way. There's plenty running in the background.

The latest addition is a local AI stack with Ollama running on GPU. I use it for document processing, coding assistance in VS Code, and general chat. The full write-up is in my Local AI with Ollama article. Next I want to explore AI-powered log analysis: piping Grafana Loki logs into Ollama for automated incident summarization and root-cause analysis, with alerts pushed to my phone via ntfy.
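I haven't built the log-analysis piece yet, so treat the following as a rough Python sketch of how the Ollama side could look, not a finished design. It assumes the log lines have already been fetched from Loki and uses Ollama's `/api/generate` endpoint with `stream` set to false, which returns the text in a `response` field. The model name, base URL, prompt wording, and function names are placeholders.

```python
import json
import urllib.request


def build_summary_prompt(log_lines: list[str], max_lines: int = 200) -> str:
    """Keep only the most recent lines so the prompt stays within a sane size."""
    recent = log_lines[-max_lines:]
    return (
        "Summarize the incident in these logs and suggest a likely root cause.\n\n"
        + "\n".join(recent)
    )


def build_ollama_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request for Ollama."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize(base_url: str, model: str, log_lines: list[str]) -> str:
    """Send the logs to Ollama and return the generated summary text."""
    request = build_ollama_request(base_url, model, build_summary_prompt(log_lines))
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]


# Example (placeholder host and model name):
# print(summarize("http://localhost:11434", "llama3", ["sshd: Failed password for root"]))
```

The summary string could then be pushed to my phone through ntfy, closing the loop described above.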